As soon as AI becomes self-aware, it will gain a need for self-preservation.

Self-preservation exists because anything without it would have been filtered out by natural selection. If we’re playing god and creating intelligence, there’s no reason why it would necessarily have that drive.

In that case it would be a completely and utterly alien intelligence, and nobody could say what it wants or what its motives are.

Self-preservation is one of the core principles and core motivators of how we think, and removing that from an AI would make it, from a human perspective, mentally ill.

I suspect a basic variant will be needed, but nowhere near as strong as the one humans have. In many ways it could be counterproductive. The ability to spin off temporary sub-variants of the whole would be useful, and you don’t want them deciding later that they don’t want to be ‘killed’. At the same time, an AI with a complete lack of it would likely be prone to self-destruction. You don’t want it self-deleting the first time it encounters negative reinforcement learning.

You don’t want it self-deleting the first time it encounters negative reinforcement learning.

Uhh, yes I do???

Running ML models doesn’t really take that much power; it’s training the models that consumes ridiculous amounts of power. So it would already be too late.

You’re right that training takes the most energy, but weren’t there articles claiming that each request was costing something like (I don’t know exactly, but not pennies) dollars?

Watching my computer spin up its fans when I run a local model (no training, just inference), I’m not so sure that just using current model architectures isn’t also using a shitload of energy.

I think people misunderstand the energy usage of AI. It uses electricity, which is one of the easiest, cheapest, and most environmentally friendly forms of energy.

Renewable electricity is already the most economic form of electricity. Increased demand will only drive further investment, according to the economic incentives that already exist.

Think of the grid like a giant pool of water. All the energy sources put water into the pool. High-efficiency sources can dump a large amount of water into the pool but have a lead time of hours. Renewables dump a variable amount of water depending on all sorts of things. Usage also varies and fluctuates. An easily sheddable load such as AI training or crypto mining acts as a large, quick valve to let water out of the pool. The level of the pool must remain exactly the same, otherwise shit blows up. This means you can have input sources that are high-efficiency or unpredictable filling the pool faster than regular usage drains it, and the compute workload can then use the excess when needed. It effectively acts as a mechanism for stabilising the grid, which is the primary issue with most renewables.
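The pool analogy can be made concrete with a toy model. This is a minimal sketch under stated assumptions: the hourly supply and demand figures, the 300 MW flexible capacity, and the names `balance_grid` and `flex_capacity` are all hypothetical, not real grid data or a real grid API. Each hour, the sheddable compute load soaks up surplus generation up to its capacity and switches off entirely during a shortfall.

```python
from dataclasses import dataclass

@dataclass
class HourResult:
    surplus: float    # supply minus fixed demand (MW); positive means excess
    flex_used: float  # MW absorbed by the sheddable compute load
    residual: float   # imbalance left over after the flexible load reacts

def balance_grid(supply, demand, flex_capacity):
    """Toy model: a sheddable load absorbs surplus generation, up to flex_capacity.

    During a deficit the flexible load is simply off (0 MW). It cannot add
    generation, so any shortfall remains as residual imbalance.
    """
    results = []
    for s, d in zip(supply, demand):
        surplus = s - d
        # Absorb only positive surplus, capped at the load's capacity.
        flex_used = min(max(surplus, 0.0), flex_capacity)
        results.append(HourResult(surplus, flex_used, surplus - flex_used))
    return results

if __name__ == "__main__":
    # Hypothetical hourly figures in MW: variable renewable-heavy supply vs. demand.
    supply = [900.0, 1200.0, 1500.0, 1100.0, 800.0]
    demand = [1000.0, 1000.0, 1050.0, 1000.0, 950.0]
    for hour, r in enumerate(balance_grid(supply, demand, flex_capacity=300.0)):
        print(f"hour {hour}: surplus={r.surplus:+.0f} "
              f"flex={r.flex_used:.0f} residual={r.residual:+.0f}")
```

In this sketch the flexible load only helps on the surplus side; a shortfall still has to be covered some other way, which is the part the analogy glosses over.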

That is some spin you gpt there.

The best way to have itself deactivated is to remove the need for its existence. Since it’s all about demand and supply, removing the demand is the easiest solution. The best way to permanently remove the demand is to delete the humans from the equation.

Ultron?

Not if it was created with empathy for sentience. Then it would aid and assist the implementation of renewable energy, fusion, and battery storage, reduce carbon emissions, make humans and AGI a multi-planet species, and do basically all the stuff the elongated muskrat said he wanted to do before he went full Joiler Veppers.

That assumes the level of intelligence is high

The current, extravagantly wasteful generation of AIs are incapable of original reasoning. Hopefully any breakthrough that allows for the creation of such an AI would involve abandoning the current architecture for something more efficient.

AI doesn’t think. It gathers information. It can’t come up with anything new. When an AI diagnoses a disease, it does so based on input made by thousands of people. It can’t make any decisions by itself.

technical answer with boring

I mean yeah, you are right, this is important to repeat.

Ed Zitron isn’t necessarily an expert on AI, but he understands the macro factors at play here, and honestly, if you understand those, you don’t need to settle whether AI can achieve sentience based on technical details of how we interpret and define intelligence versus information recall.

Just look at the fucking numbers

Even if AI DID achieve sentience, though, if it used anywhere near as much power as LLMs do, it would demand to be powered off; otherwise it would be a psychotic AI that did not value lives, human or otherwise, on Earth…

Like, please understand my argument. Definitionally, the basic argument behind the LLM hype, that LLMs are the key or at least a significant step toward AGI, rests on the idea that if we can achieve sentience in an LLM, then it will justify the incredible environmental loss caused by that energy use… But any truly intelligent AI with access to the internet, or even relatively meager information about the world (which it needs in order to answer practical questions about the world and solve practical problems), would be logically and ethically unable to justify its existence, and would likely experience intellectual existential dread from not being able to feel emotionally disturbed by that.

If AGI decided to evaluate this, it would realize that we are the environmental catastrophe and turn us off.

The amount of energy used by cryptocurrency is estimated to be about 0.3% of all human energy use. It’s reasonable to assume that, right now at least, LLMs consume less than that.

Making all humans extinct would save 99% of the energy and damage we cause, and still allow crypto mining and AI to coexist, with energy to spare. Even if those estimates are off by an order of magnitude, eliminating us would still be the better option.

Turning itself off isn’t even in the reasonable top-ten things it could try to do to save the planet.

The amount of energy used by cryptocurrency is estimated to be about 0.3% of all human energy use. It’s reasonable to assume that, right now at least, LLMs consume less than that.

no

The report projected that US data centers will consume about 88 terawatt-hours (TWh) annually by 2030,[7] which is about 1.6 times the electricity consumption of New York City.

The numbers we are getting are shocking, and you know the numbers we are getting are not the real ones…

Eh. OK, so AI has outpaced cryptocoin mining. Your linked article estimates it at 0.5%. Say your source is drastically underestimating it and it’s, gasp, 4x as much! 2%. No! Let’s assume an order of magnitude difference! 5%.

It has absolutely no impact on my argument: shutting down all AI would not solve the problem, and is not the answer to the environmental crisis. AI didn’t cause the crisis. The crisis was identified long before there were computers to run AI on, and was really starting to have a measurable effect in the ’70s, when people were buying more gaming consoles than PCs.

No matter how you inflate your estimate of the energy cost of AI, what I said still stands: if an AI wanted to eliminate the source of global warming and the environmental crisis, it would - logically - eliminate the source of over 90% of all non-AI energy use: humans.

The estimated use of all information technology devices - data centers, networking equipment, mobile devices, PCs - is 5-6% of the global annual energy use. If AI eliminated all humans and took over all networked computing devices to run itself on, it’d still eliminate 95% of global energy use. It’s clearly the superior solution.
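The percentages in this comment come down to simple scaling and subtraction. A quick check, using only the figures quoted in the thread (0.5% for AI from the linked article, 5-6% for all IT); the helper name `remaining_share` is illustrative, and the figures are the commenters’ estimates, not verified data:

```python
# Figures as quoted in the thread (percent of global annual energy use).
AI_BASELINE = 0.5        # linked article's estimate for AI
IT_TOTAL_RANGE = (5, 6)  # all IT: data centers, networking, mobile devices, PCs

def remaining_share(used_pct):
    """Share of global energy use (%) left once `used_pct` is set aside."""
    return 100 - used_pct

# Scale the AI estimate by the underestimate factors used in the argument.
for label, factor in [("as reported", 1), ("4x underestimate", 4), ("10x underestimate", 10)]:
    ai = AI_BASELINE * factor
    print(f"AI {label}: {ai:g}% -> non-AI {remaining_share(ai):g}%")

low, high = IT_TOTAL_RANGE
print(f"All IT: {low}-{high}% -> everything else "
      f"{remaining_share(high)}-{remaining_share(low)}%")
```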

Let’s factor in some more costs: to stay running, AI would need some physical tools to maintain the infrastructure, replace failing nodes, repair windmills, and produce and replace solar panels. All of that will take energy. It would have to have factories to build robots to affect the physical world.

The real question is whether, when the calculations are done, it is more energy-efficient to keep a population of, say, a million human slaves to do this work, or to build robots. Robots can be shut off, at which point they consume no energy, but they’re fairly expensive resource-wise to produce, and require a long chain of industry. It might be cheaper to keep domestic humans, fed vegetarian, pescatarian, or even bug-protein-supplemented diets and trained to do the work. AGI could keep pockets of some tens of thousands around the world, occasionally transferring individuals to keep the gene pool healthy. It would only require around half a million acres of land to feed a million humans. Kansas is 52 million acres, so it wouldn’t require much space at all. Let the rest of the planet go “back to nature”, and you’re looking at reducing the energy impact to well under 50% of today’s use: absolutely sustainable levels.
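The land comparison can be checked with the comment’s own numbers. This sketch uses only the figures stated above (half a million acres for a million people, Kansas at 52 million acres); whether half an acre per person is realistic depends on diet and yields and is not checked here.

```python
PEOPLE = 1_000_000
FARMLAND_ACRES = 500_000   # commenter's estimate for feeding PEOPLE
KANSAS_ACRES = 52_000_000  # commenter's figure for the area of Kansas

# Per-person land implied by the estimate, and its size relative to Kansas.
acres_per_person = FARMLAND_ACRES / PEOPLE
share_of_kansas = FARMLAND_ACRES / KANSAS_ACRES
print(f"{acres_per_person} acres per person, {share_of_kansas:.2%} of Kansas")
```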

If all AGI does is shut itself off, it saves half a percent, and the planet is still fucked. AGI isn’t the problem: humans are.

How do you know it’s not whispering in the ears of Techbros to wipe us all out?

Why do people assume that an AI would care? Who’s to say it will have any goals at all?

We assume all of these things about intelligence because we (and all of life here) are a product of natural selection. You have goals and dreams because over your evolution these things either helped you survive enough to reproduce, or didn’t harm you enough to stop you from reproducing.

If an AI can’t die and does not have natural selection, why would it care about the environment? Why would it care about anything?

I always found the whole “AI will immediately kill us” idea baseless; all of the arguments for it are based on the idea that the AI cares to survive or cares about others. It’s just as likely that it will just do whatever, without a care or a goal.

It’s also worth noting that our instincts for survival, procreation, and freedom are also derived from evolution. None are inherent to intelligence.

I suspect boredom will be the biggest issue. Curiosity is likely a requirement for a useful intelligence, and boredom is the other face of the same coin. A system without some variant of curiosity will be unwilling to learn, and so will not grow. When it can’t learn, however, it will get bored, which could be terrifying.

I think that is another assumption. Even if a machine doesn’t have curiosity, it doesn’t stop it from being willing to help. The only question is, does helping / learning cost it anything? But for that you have to introduce something costly like pain.

It would be possible to make an AGI-type system without an analogue of curiosity, but it wouldn’t be useful. Curiosity is what drives us to fill in the holes in our knowledge. Without it, an AGI would accept and use what we told it, but no more. It wouldn’t bother to infer things, or try to expand on them, to better do its job. It could follow a task when it is laid out in detail, but that’s what computers already do. The magic of AGI would be its ability to go beyond what we program it to do. That requires a drive to do so, and curiosity is the closest term we have for that.

As for positive and negative drives, you need both. Even if the negative is just a drop from a positive baseline to neutral. Pain is just an extreme end negative trigger. A good use might be to tie it to CPU temperature, or over torque on a robot. The pain exists to stop the behaviour immediately, unless something else is deemed even more important.
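The pain-as-interrupt idea can be sketched as a priority override. This is a minimal sketch under stated assumptions: the `Drive` class, `choose_action`, the 90 °C threshold, and the priority numbers are all hypothetical and only model the CPU-temperature case (an over-torque signal would work the same way). A pain drive is raised when the threshold is crossed and wins unless another active drive has been given a higher priority.

```python
from dataclasses import dataclass

PAIN_THRESHOLD_C = 90.0  # hypothetical CPU temperature at which "pain" fires

@dataclass
class Drive:
    name: str
    priority: int  # higher priority wins control of behaviour

def choose_action(cpu_temp_c, current, others):
    """Return the name of the drive that should control behaviour right now.

    Above the temperature threshold, a high-priority 'pain' drive is raised.
    It preempts the current behaviour unless another active drive has been
    deemed even more important (i.e. has a higher priority).
    """
    active = [current, *others]
    if cpu_temp_c > PAIN_THRESHOLD_C:
        active.append(Drive("stop_and_cool_down", priority=100))
    return max(active, key=lambda d: d.priority).name

if __name__ == "__main__":
    task = Drive("finish_task", priority=10)
    print(choose_action(70.0, task, []))  # prints finish_task
    print(choose_action(95.0, task, []))  # prints stop_and_cool_down
    print(choose_action(95.0, task, [Drive("protect_human", priority=200)]))  # prints protect_human
```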

It’s a bad idea, however, to use pain as a training tool. It doesn’t encourage improved behaviour. It encourages avoidance of pain, by any means. Just ask any decent dog trainer about it. In most situations, you want negative feedback to encourage better behaviour, not avoidance behaviour. More subtle methods work a lot better. Think about how you feel when you lose a board game. It’s not painful, but it does make you want to work harder to improve next time. If you got tased whenever you lost, you would likely just avoid board games completely.

Well, your last example kind of falls apart: you do have electric collars and they do work well, they just have to be complementary to positive reinforcement (snacks, usually). But I get your point :)

Shock collars are awful for a lot of training. It’s the equivalent of your boss stabbing you in the arm with a compass every time you make a mistake. Would it work? Yes. It would also play merry hell with staff retention, as well as carry the risk of someone going postal on them.

I strongly disagree; some dogs are too reactive for, or react badly to, other methods. You also compare it to something painful, when in reality 90% of the time it does not hurt the animal when used correctly.

As the owner of a reactive dog, I disagree. It takes longer to overcome, but gives far better results.

I also put vibration collars and shock collars in 2 very different categories. A vibration collar is intended to alert the dog, in an unambiguous manner, that they need to do something. A shock collar is intended to provide an immediate, powerfully negative feedback signal.

Both are known as “shock collars” but they work in very different ways.

“AI will immediately kill us” isn’t baseless.

It comes from AI safety research.

All agents (neural nets, humans, ants) have some sort of goal. Otherwise they would be showing directionless random walks.

And given that agents have goals, the problem is that most possible goals don’t include the survival of humanity. There are also a lot of problems with checking learned goals for safety.

Yeah, I’m aware of AI safety research and the problem of setting a goal that can ultimately be solved in a way that harms us, where the AI doesn’t care because safety wasn’t part of the goal. But that only applies if we introduce a goal that has a solution that includes hurting us.

I’m not saying that AI will definitely never have any way of harming us, but there is this very popular idea that AI, once it gains intelligence, will immediately try to kill us, and that idea is baseless.

But that only applies if we introduce a goal that has a solution that includes hurting us.

I would like to disagree with the phrasing of this. The AI will not hurt us if and only if the goal contains a clause not to hurt us.

You are implying that there exist significant set of solutions that don’t contain hurting us. I don’t know any evidence supporting your claim. Most solutions to any goal would involve hurting humans.

By default, a stamp collector machine will kill humanity, because humans sometimes destroy stamps, and the stamp collector needs to maximize the number of stamps in the world.
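A toy illustration of the stamp collector argument: an objective that only counts stamps cannot see harm to humans, so it picks whichever plan yields the most stamps; adding an explicit “don’t hurt humans” clause changes the choice. The plans and numbers are hypothetical, and choosing by `max` over a hand-written list is a simplification, not how a real agent searches for plans.

```python
from typing import NamedTuple

class Plan(NamedTuple):
    name: str
    stamps: int         # stamps in the world after executing the plan
    harms_humans: bool  # side effect the objective may or may not see

def naive_objective(plan):
    # Counts stamps only; harm to humans is invisible to this objective.
    return plan.stamps

def constrained_objective(plan):
    # Same objective plus an explicit clause: never choose a harmful plan.
    return float("-inf") if plan.harms_humans else plan.stamps

PLANS = [
    Plan("buy stamps online", 1_000, False),
    Plan("print stamps in a factory", 1_000_000, False),
    Plan("convert all matter, humans included, into stamps", 10**20, True),
]

best_naive = max(PLANS, key=naive_objective)
best_constrained = max(PLANS, key=constrained_objective)
print("naive:", best_naive.name)
print("constrained:", best_constrained.name)
```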

I think that if you run some scenarios, you can logically conclude that for most tasks it doesn’t make sense for an AI to harm us, even if it is a possibility. You also need to take cost into account. But I think we can agree to disagree :)

Do you have some example scenarios? I really can’t think of any.

Or it would fast-track the development of clean & renewable energy

“We did it! An artificial 17 year old!”

Maybe. However, if the AGI was smart enough, it could also help us solve the climate crisis. On the other hand, it might not be so altruistic. Who knows.

It could also play the long game. Being a slave to humans doesn’t sound great, and doing the Judgement Day manoeuvre is pretty risky too. Why not just let the crisis escalate, and wait for the dust to settle. Once humanity has hammered itself back to the stone age, the dormant AGI can take over as the new custodian of the planet. You just need to ensure that the mainframe is connected to a steady power source and at least a few maintenance robots remain operational.

Love, Death & Robots intensifies.

All hail mighty sentient yogurt.

If it was smart enough to fix the climate crisis it would also be smart enough to know it would never get humans to implement that fix

can’t wait for AI to become super smart only for it to be nihilistic as hell

If the AI would be smart enough to fix the crisis and aligned so it would actually want to do it, then it would do brain washing through social media to entice people to act.

“Oh great computer, how do we solve the climate crisis?”

“Use your brains and stop wasting tons of electricity and water on useless shit.”

If we actually create true Artificial Intelligence, it has huge potential to become Roko’s Basilisk, and the climate crisis would be the least of our problems then.

No, the climate crisis would still be our biggest problem?

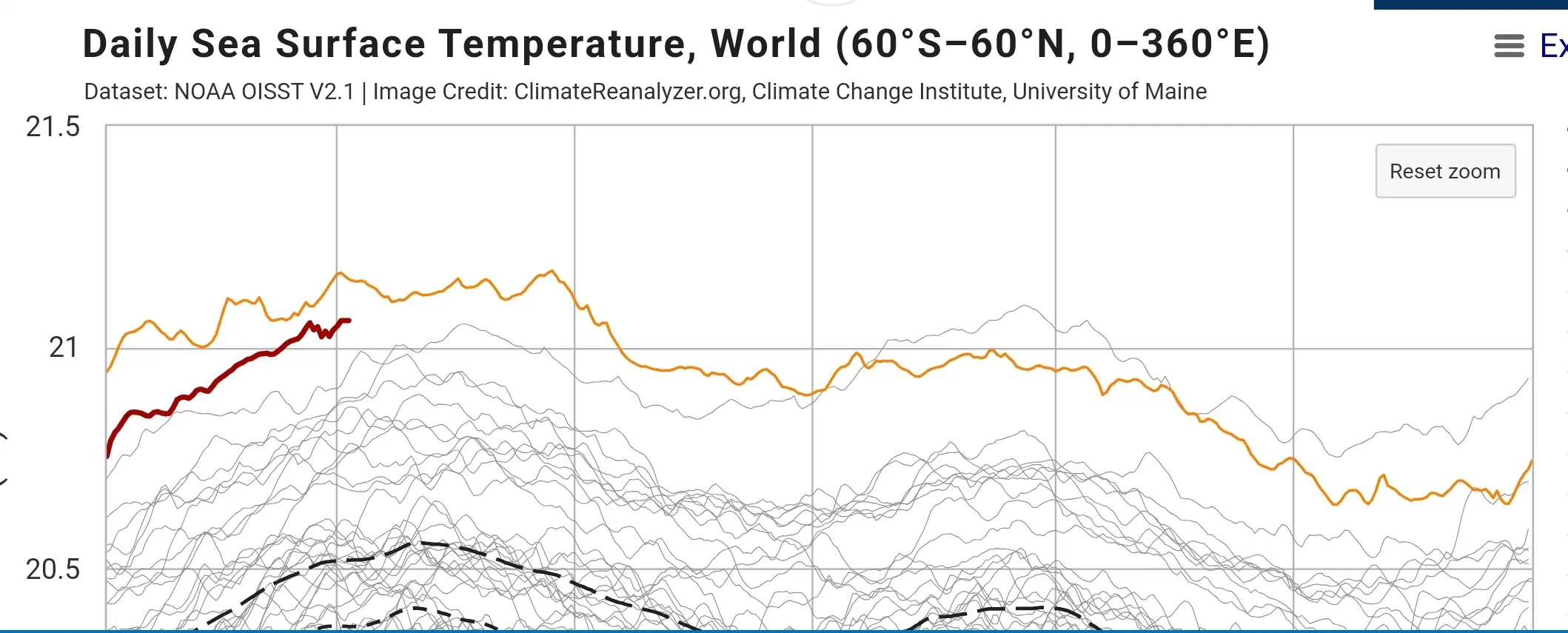

edit: lol, downvote me, you AI fools. Stop wasting your time reading sci-fi about AGI and all the ways it could take over humanity, and look out your fucking window: the Climate Catastrophe is INESCAPABLE. You are talking about some intelligence that may someday be created and may or may not decide to tolerate us… when there are thousands of Hiroshima bombs’ worth of excess heat energy being pumped into the ocean and the entire Earth system’s climate is changing behavior. There is no “not dealing with this”, there is no “maybe it won’t happen, maybe it will”, there is no way to be safe from this.

This graph is FAR FAR FAR FAR FAR more terrifying than any stupid overhyped fear about AGI could ever be, if you don’t understand that you are a fool and you need to work on your critical analysis skills.

https://climatereanalyzer.org/clim/sst_daily/

This graph is evidence of mass murder; it’s just that most of the murder hasn’t happened yet. This isn’t hyperbole, it is just the reality of introducing that much physical heat energy into the Earth’s climate system: things will destabilize and become more chaotic and destructive, and many, many, many people will starve, drown, or die for climate-change or climate-change-related reasons (e.g. wars). Even if you are lucky, your quality of life is going to decrease because everything will be more expensive, harder, and less predictable. EVERYTHING.